Key takeaways

- Pigment applies AI across all of its internal teams and is building a library of internal AI use cases, with implementation details and lessons learned.

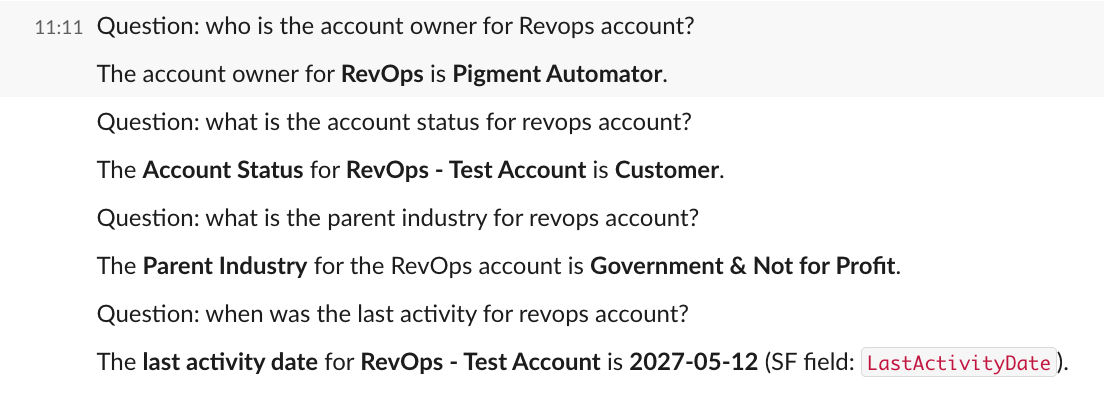

- A Slack bot lets GTM teams query Salesforce in natural language, saving roughly 3 to 5 minutes per lookup while reducing context switching and internal back-and-forth.

- The Salesforce bot normalizes company names, ranks the relevant accounts, returns the single best match, and checks the user's permissions before exposing account data in Slack.

- Across Pigment's R&D organization, AI-powered engineering tools save engineers anywhere from more than 2 hours per day to several days per week, and speed up feature development and refactoring.

- In Gong, automated sales scorecards analyze call transcripts to highlight coaching priorities, assess qualification and meeting execution, and surface the best calls in Slack.

- A monthly Google Apps Script generated with ChatGPT automates analyst meeting tracking from Google Calendar to Sheets, saving hours of repetitive manual work.

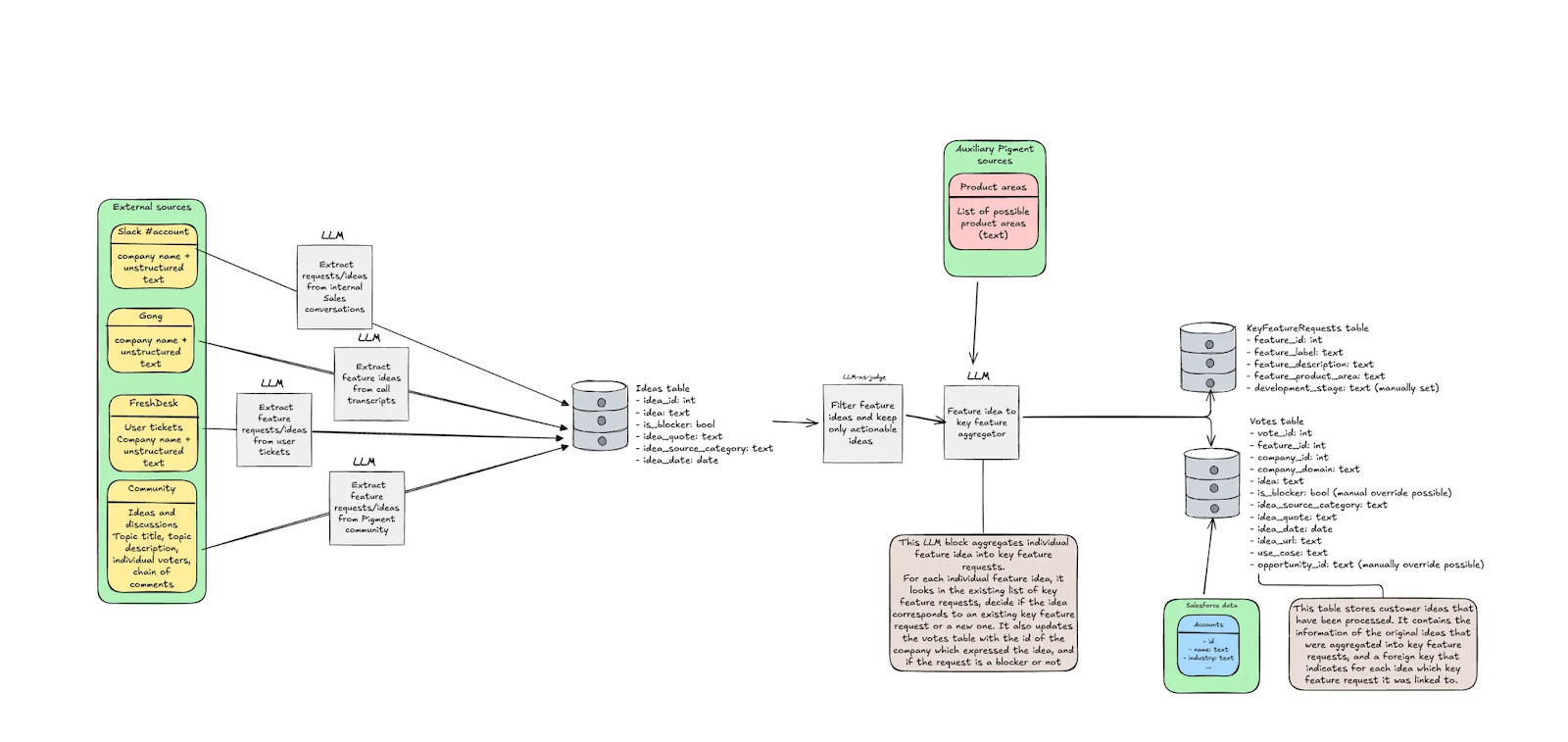

- The Customer Idea Agent extracts and clusters feedback from four sources, enriches it with CRM data, and links ideas to active sales opportunities to quantify revenue impact.

Last updated: February 24

Pigment is a resolutely AI-first organization. That focus shows in our product strategy, and also in how our internal teams operate day to day.

Every team at Pigment delivers more today than it did last year thanks to AI. Getting there took a lot of experimentation, and this is only the beginning.

In that spirit, we are launching a library of internal AI use cases, detailing how each one was designed and the key lessons learned, to inspire and support other organizations in their own efforts.

Salesforce in your pocket

Teo Leventhal, Growth Analyst

What is it?

Within the Growth team, we built a Slack bot that lets GTM (Go To Market) teams query Salesforce in natural language and get reliable, up-to-date account information directly in Slack.

This is especially useful for sales teams and executives, who are often on the move without immediate access to their computer or the Salesforce app. The Slack plugin answers common questions and gives access to any type of information stored in Salesforce, for example:

- Who owns account X?

- What is the status of opportunity Y?

- Has contact Z been engaged?

- Has customer N signed a marketing agreement?

To keep things running smoothly, the bot normalizes company names, identifies the most relevant Salesforce account, extracts the requested data, and returns a clear, structured answer, while checking that the user has the necessary Salesforce access rights.
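The matching steps described above can be illustrated with a minimal Python sketch. This is not the bot's actual implementation (which runs on n8n and ChatGPT): the suffix list, the `allowed_users` field, and all function names are hypothetical, and the similarity score uses the standard library's `difflib` as a stand-in for whatever ranking the bot really applies.

```python
import re
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical list of legal suffixes to strip; a real deployment would tune this.
LEGAL_SUFFIXES = {"inc", "ltd", "llc", "sas", "gmbh", "corp", "co"}


def normalize_company_name(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and strip trailing legal suffixes."""
    tokens = re.sub(r"[^\w ]+", " ", name.lower()).split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


def rank_accounts(query: str, accounts: list) -> list:
    """Score every account name against the query and sort best match first."""
    target = normalize_company_name(query)
    scored = [
        (SequenceMatcher(None, target, normalize_company_name(a["name"])).ratio(), a)
        for a in accounts
    ]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


def best_account_for(user: str, query: str, accounts: list) -> Optional[dict]:
    """Return the single best match, or None if the user is not allowed to see it."""
    ranked = rank_accounts(query, accounts)
    if not ranked:
        return None
    _, account = ranked[0]
    # Assumed permission model: each account carries a set of allowed users.
    if user not in account["allowed_users"]:
        return None
    return account
```

Returning only the top-ranked account mirrors the single-best-match behavior described in this section; the permission check runs after ranking so that unauthorized users get no account data at all.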

Benefits

The main benefits are simpler access to information, less context switching, fewer internal interruptions, and stronger use of Salesforce as the single source of truth.

By handling imprecise queries and ranking accounts by relevance, the solution also limits errors caused by duplicates or inconsistent taxonomy.

Before this automation was in place, users relied on manual searches in Salesforce or on repeated Slack exchanges with various teams (Customer Success, Sales Ops, or other sales reps) to get basic information about an account.

We estimate a saving of 3 to 5 minutes per query. For most users, this happens several times a day. Beyond the time saved, immediate access to information at the right moment is a major operational lever.

Lessons learned and limitations

While effective, the bot still has limitations: response time, the number of supported fields, the handling of ambiguous cases, and the fact that it currently returns only the single account that best matches the query.

Upcoming improvements will focus on performance, a wider set of available fields, richer follow-up interactions, and more proactive account insights.

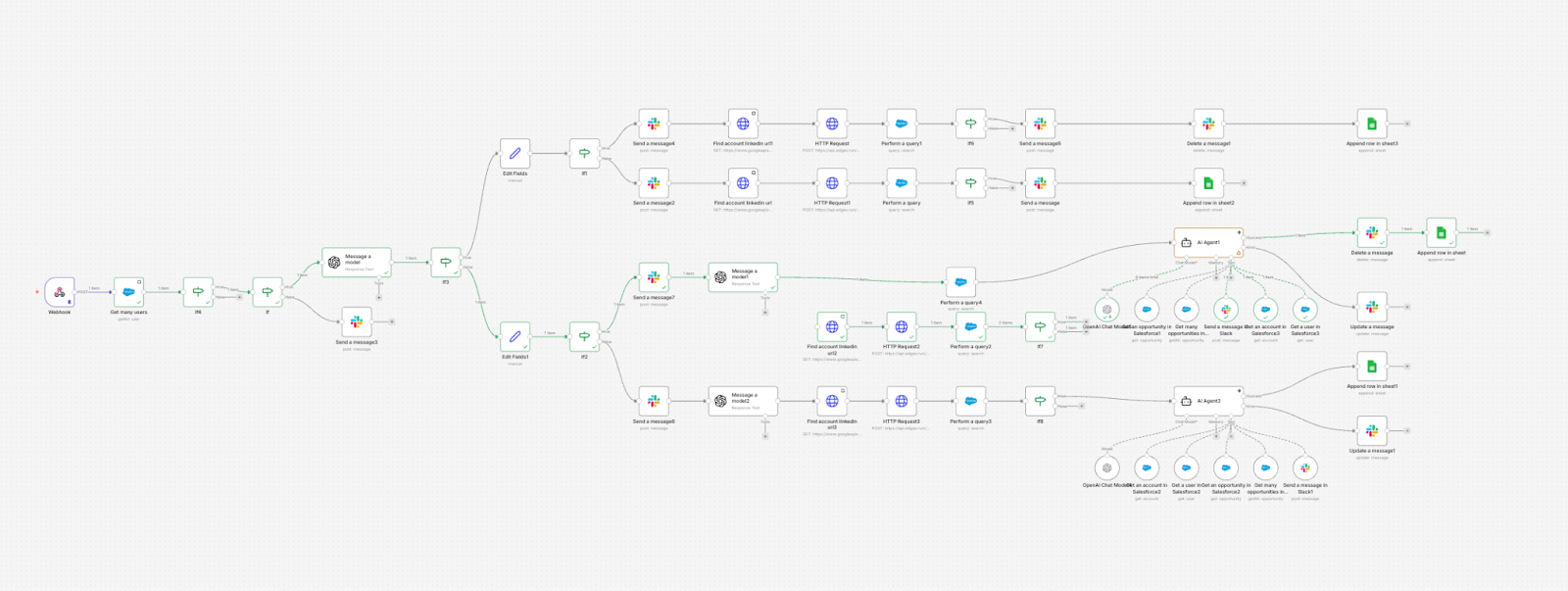

Tools used: Slack, n8n, ChatGPT, Salesforce, Google Sheets (for monitoring and analysis).

AI for R&D

Virginie Jugie, Engineering Program Manager Lead

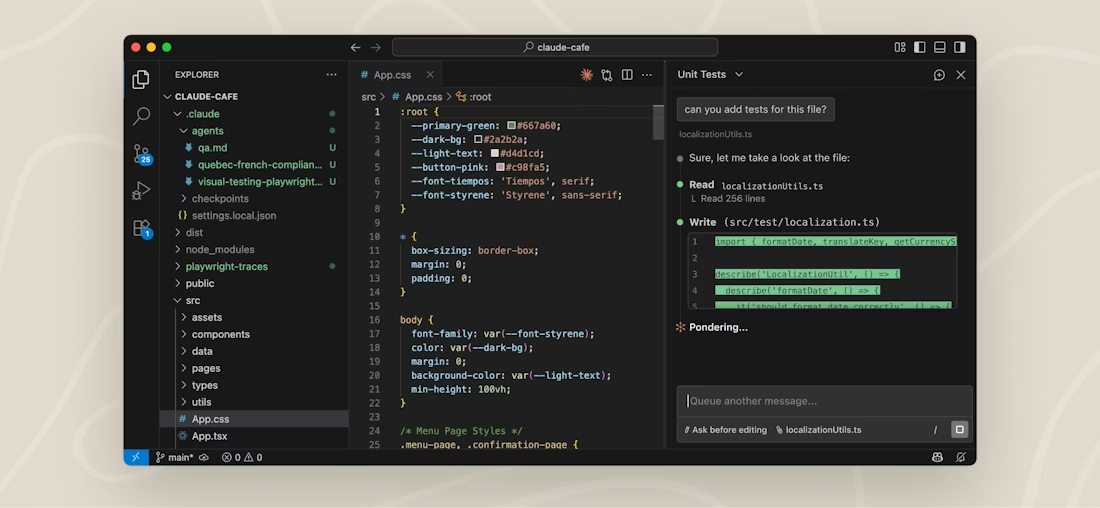

Over the past year, Pigment has rolled out AI-driven development tools across the entire R&D organization: AI coding assistants, as well as specialized tools for incident management (incident.io), technical documentation via the OpenAI API, and code review (Auggie, Bugbot, Copilot, Claude Code).

Benefits

The project has been an undeniable success so far:

- Engineers report saving more than two hours per day, and in some cases several days per week.

- Development cycles are faster, both for shipping new features and for refactoring and code maintenance.

- AI democratizes knowledge by making it easier to explore and understand our codebase. In the longer term, this speeds up onboarding for new hires, even in complex technical environments.

Lessons learned and limitations

AI-based development tools are a major shift from traditional ways of working. To ensure effective adoption, we run tailored training. For each tool, we offer demos and training sessions built on real use cases. In parallel, developers actively share with one another and document the best practices they identify.

Because we are experimenting with several tools, each addressing specific needs, and because the ecosystem is evolving quickly, we want to keep an open approach. That inevitably brings challenges around tool fragmentation and license management. It also remains difficult to measure productivity gains precisely beyond what users self-report.

Next steps:

- Create playbooks and guidelines specific to tasks and roles within the team.

- Finalize our AI usage tracking, in order to consolidate and optimize our license spend.

- Formalize a quantitative benchmarking framework to evaluate AI-based development tools objectively.

We now have enough evidence to say that AI-assisted development generates tangible value. The next step is to build clear frameworks and benchmarks to measure its impact and continuously improve our practices.

Tools used: Cursor, GitHub Copilot, Augment, Claude Code, OpenAI.

Sales scorecards

Guy Solomon, Revenue Enablement Specialist

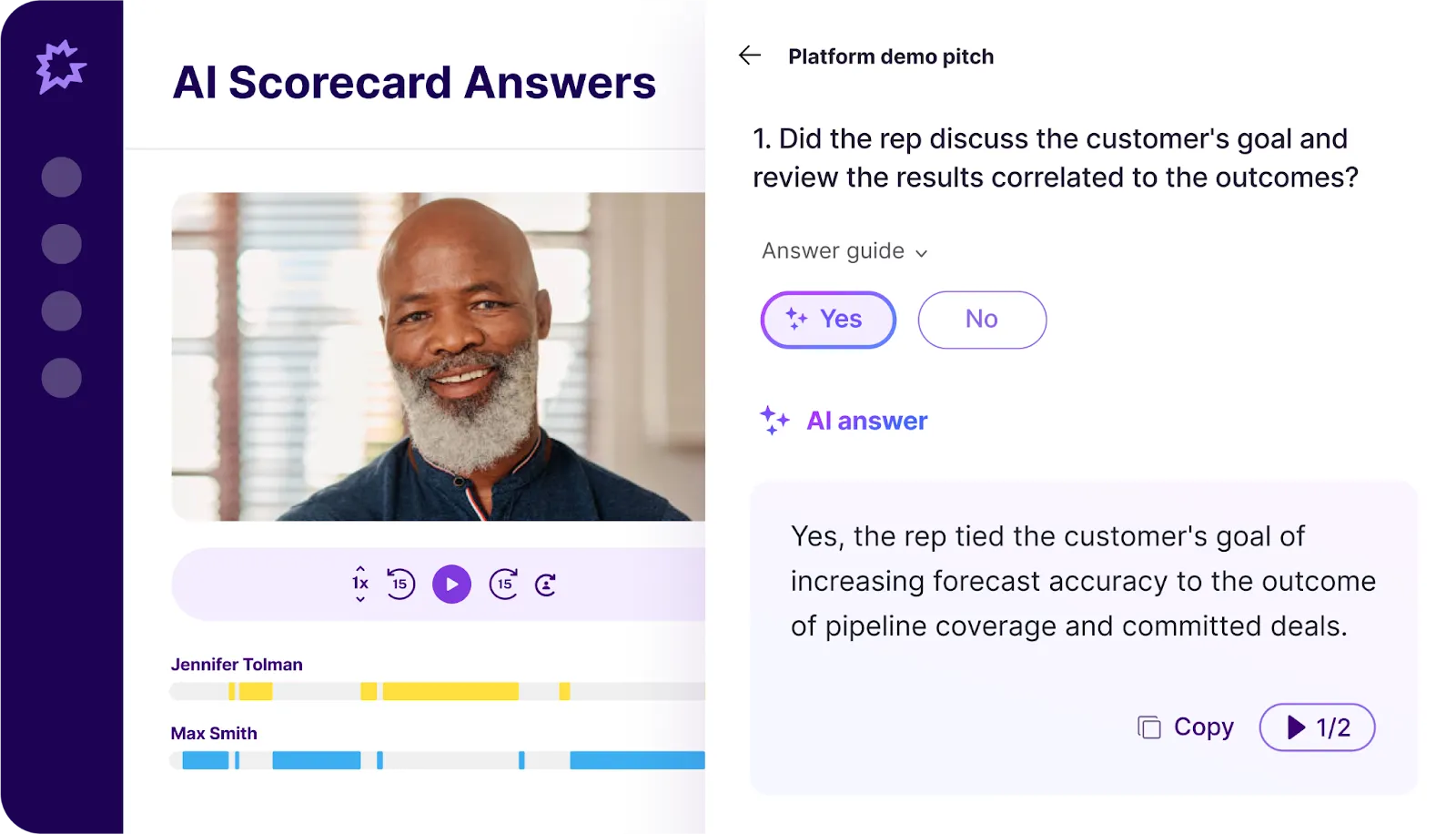

Within Gong, we configured scorecards that run automatically on calls made by Pigment's sales teams.

Benefits

We can now direct managers to the priority coaching areas, based on conversation transcripts and the trends identified across customer interactions.

For example, we can assess how well BDRs qualify opportunities before handing them over, or measure how closely sales reps follow best practices in their meetings.

The highest-scoring calls are automatically shared in the relevant Slack channels, to celebrate wins and provide concrete examples of what excellence looks like, particularly for onboarding.

Lessons learned and limitations

Over time, we will see where the gaps lie in the execution of all customer interactions, depending on the scorecards we choose to create in Gong.

Tools used: Gong, Slack

Automating meeting tracking

Ed Gromann, Global Head of Analyst Relations

As part of my day-to-day work, I have to log analyst interactions in a Google Sheet: event participants, topics covered, and follow-up actions.

Using Prompt Cowboy, I designed a prompt for ChatGPT, which generated a Google Apps Script that runs automatically on the 1st of each month.
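The generated Google Apps Script itself is not reproduced in this article. As a rough illustration of the logic such a monthly job might apply, here is a Python sketch that turns calendar events from the previous month into spreadsheet rows. The event fields (`start`, `title`, `attendees`), the keyword filter, and the function names are all assumptions made for the example.

```python
from datetime import date, datetime


def previous_month(today: date) -> tuple:
    """Return (year, month) for the month before `today`."""
    if today.month == 1:
        return today.year - 1, 12
    return today.year, today.month - 1


def analyst_rows(events: list, today: date, keyword: str = "analyst") -> list:
    """Build one spreadsheet row per matching meeting held in the previous month."""
    year, month = previous_month(today)
    rows = []
    for event in sorted(events, key=lambda e: e["start"]):
        start: datetime = event["start"]
        if (start.year, start.month) != (year, month):
            continue  # outside the reporting window
        # Assumed convention: analyst meetings mention the keyword in their title.
        if keyword not in event["title"].lower():
            continue
        rows.append(
            [start.date().isoformat(), event["title"], ", ".join(event["attendees"])]
        )
    return rows
```

Run on the 1st of a month, `analyst_rows(events, date.today())` would yield the rows to append to the sheet. A stricter filter is the kind of change that would make the job more selective about what it collects.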

Benefits

This used to involve a large volume of repetitive manual work each month: entering the details of every meeting from my calendar into the spreadsheet.

Lessons learned and limitations

The script pulls in some information that isn't needed. An optimization is planned to make it more selective.

Tools used: Prompt Cowboy, ChatGPT, Google Apps Script, Google Calendar

Customer Idea Agent

Lea Benyamin, Data Automation Engineer

The Customer Idea Agent (CIA) uses a pipeline of custom LLM agents to automatically extract, centralize, and quantify the product enhancement and feature requests made by our customers and prospects across our entire feedback ecosystem:

- Ideas shared on the Pigment Community

- Slack messages

- Freshdesk tickets

- Gong call transcripts

This approach lets us use data-driven signals, at scale, to prioritize product development in a structured and objective way.

Benefits

Our LLM pipeline extracts and clusters ideas and feature requests, then enriches them with data from our CRM. This lets us analyze product feedback along several dimensions: customer use case, location, industry, or segment.

We can thus identify and track structured interest signals, allowing the Product Management and GTM (Go To Market) teams to spot the features with the highest business impact.

Key point: we establish a direct link between these ideas and active sales opportunities. Our roadmap therefore rests on a quantified assessment of revenue impact, which lets us prioritize development in line with both customer needs and our business objectives.
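To make the link between ideas and opportunities concrete, the following Python sketch shows one plausible way to quantify revenue impact: summing the open opportunity amounts of every account that requested a given idea. The record shapes (`idea`, `account_id`, `amount`, `is_open`) and the aggregation rule are assumptions for illustration, not the production pipeline, which runs in BigQuery.

```python
from collections import defaultdict


def revenue_impact_by_idea(idea_requests: list, opportunities: list) -> list:
    """Sum open-opportunity amounts per idea, counting each account once per idea."""
    # Total open pipeline per account (closed opportunities are ignored).
    open_amount = defaultdict(float)
    for opp in opportunities:
        if opp["is_open"]:
            open_amount[opp["account_id"]] += opp["amount"]

    # Distinct accounts behind each idea, so repeated requests are not double counted.
    accounts_by_idea = defaultdict(set)
    for request in idea_requests:
        accounts_by_idea[request["idea"]].add(request["account_id"])

    impact = {
        idea: sum(open_amount.get(account, 0.0) for account in accounts)
        for idea, accounts in accounts_by_idea.items()
    }
    # Highest revenue impact first.
    return sorted(impact.items(), key=lambda item: item[1], reverse=True)
```

Deduplicating accounts per idea is a deliberate choice in this sketch: a customer who raises the same request in Slack, Freshdesk, and a Gong call should weigh in once, not three times.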

Lessons learned and limitations

Benefits of integrating Vertex AI with BigQuery

One of the major technical advantages was using Vertex AI directly within our BigQuery workflows. This integration removed complex data transfer flows between systems and let us apply LLM capabilities right where our data lives. By centralizing everything in the BigQuery ecosystem, we achieved faster processing times, lower latency, and a simpler architecture.

This native integration also provides smooth scalability while maintaining a high level of data governance and security within our existing data warehouse infrastructure.

The critical role of data modeling and data quality

Even the best-performing LLM remains dependent on the quality of the data it processes. Building on our existing data modeling pipeline proved decisive in getting usable results. Our pipeline standardizes feedback from heterogeneous sources, each of which requires rigorous preprocessing to produce consistent, high-quality data.

Ongoing challenges

Evaluating the quality of LLM outputs remains a challenge. Although we have built an "LLM-as-judge" agent into our pipeline to detect and filter out inaccuracies, human review is still essential to ensure reliable results.
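One way to combine an automated judge with human review is a simple triage step, sketched below in Python. The judge is passed in as a plain callable returning a confidence score between 0 and 1, standing in for the LLM call; the thresholds, the three-way split, and the function name are hypothetical and not taken from our pipeline.

```python
from typing import Callable


def triage_ideas(
    ideas: list,
    judge: Callable[[dict], float],
    accept_at: float = 0.8,
    reject_below: float = 0.3,
) -> tuple:
    """Split ideas into (accepted, needs_review, rejected) based on the judge score."""
    accepted, needs_review, rejected = [], [], []
    for idea in ideas:
        score = judge(idea)  # in practice, an LLM-as-judge call
        if score >= accept_at:
            accepted.append(idea)
        elif score < reject_below:
            rejected.append(idea)
        else:
            # Uncertain cases are queued for a human reviewer.
            needs_review.append(idea)
    return accepted, needs_review, rejected
```

Keeping a middle band between the two thresholds means the judge filters the obvious cases while ambiguous extractions still reach a person.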

We have set up quality audits in which Product Managers review the clustered ideas to validate their relevance and identify edge cases.

LLMs can occasionally misinterpret context or merge unrelated ideas. While our clustering algorithms perform well overall, they require continuous tuning and monitoring.

We have also deployed feedback mechanisms that let users flag incorrect categorizations. This feedback feeds a continuous improvement process for our prompts and models.

Tools used: Vertex AI, BigQuery, dbt Core, Gemini.
